In parts one and two of this AI blog series, we explored the strategic considerations and networking needs for a successful AI implementation. In this blog I focus on data center infrastructure, with a look at the computing power that brings it all to life.

Just as humans use patterns as mental shortcuts for solving complex problems, AI is about recognizing patterns to distill actionable insights. Now think about how this applies to the data center, where patterns have evolved over decades. There are cycles where we use software to solve problems, and then hardware innovations enable new software to focus on the next problem. The pendulum swings back and forth repeatedly, with each swing representing a disruptive technology that changes and redefines how we get work done, both with our developers and with data center infrastructure and operations teams.

AI is clearly the latest pendulum swing and a disruptive technology that requires advancements in both hardware and software. GPUs are all the rage today thanks to the public debut of ChatGPT, but GPUs have been around for a long time. I was a GPU user back in the 1990s because these powerful chips let me play 3D games that required fast processing to calculate things like where all those polygons should be in space, updating the visuals quickly with each frame.

In technical terms, GPUs can process many parallel floating-point operations faster than standard CPUs, and that is largely their superpower. It’s worth noting that many AI workloads can be optimized to run on a high-performance CPU. But unlike the CPU, GPUs are free from the responsibility of making all the other subsystems within a compute node work with each other. Software developers and data scientists can leverage software like CUDA and its development tools to harness the power of GPUs and use all that parallel processing capability to solve some of the world’s most complex problems.

A new way to look at your AI needs

Unlike a single, homogeneous infrastructure use case such as virtualization, AI contains multiple patterns, each with different infrastructure needs in the data center. Organizations can think about AI use cases in terms of three main buckets:

- Build the model, for large-scale foundation model training.

- Optimize the model, for fine-tuning a pre-trained model with specific data sets.

- Use the model, for inferencing insights from new data.

The least demanding workloads are optimizing and using the model, because most of that work can be done in a single box with multiple GPUs. The most intensive, disruptive, and expensive workload is building the model. In general, if you’re looking to train these models at scale, you need an environment that can support many GPUs across many servers, networked together so that the individual GPUs behave as a single processing unit to solve highly complex problems faster.
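To make the multi-server training pattern above more concrete, here is a minimal Python sketch of synchronous data-parallel training, one common way to make many GPUs act as a single processing unit. It is an illustration under simplifying assumptions, not a Cisco or vendor implementation: the "GPUs" are simulated workers in plain Python, the model is a one-parameter linear fit, the shards are assumed to be equal-sized, and the hypothetical `all_reduce_mean` function stands in for the collective operation that, on a real cluster, moves gradients across the network on every training step.

```python
# Hypothetical sketch of synchronous data-parallel training.
# Each simulated worker ("GPU") computes a gradient on its own data shard;
# an all-reduce (here, a plain average) gives every worker the same gradient,
# so all workers apply the same update and behave like one large processor.
from typing import List, Tuple

Sample = Tuple[float, float]  # (input x, target y)


def local_gradient(weight: float, shard: List[Sample]) -> float:
    """Gradient of mean squared error for the model y = weight * x on one shard."""
    return sum(2 * (weight * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values: List[float]) -> float:
    """Stand-in for a network all-reduce: every worker receives the average."""
    return sum(values) / len(values)


def train(data: List[Sample], num_workers: int, steps: int, lr: float) -> float:
    # Round-robin split into one shard per worker. Averaging shard gradients
    # equals the full-batch gradient only when shards are equal-sized.
    shards = [data[i::num_workers] for i in range(num_workers)]
    weight = 0.0
    for _ in range(steps):
        # On real hardware these run concurrently, one per GPU.
        grads = [local_gradient(weight, shard) for shard in shards]
        # This exchange crosses the network on every single step.
        weight -= lr * all_reduce_mean(grads)
    return weight


if __name__ == "__main__":
    data = [(float(x), 3.0 * x) for x in range(1, 9)]  # true weight is 3.0
    print(f"{train(data, num_workers=4, steps=200, lr=0.01):.4f}")
```

Because every step ends with an exchange among all workers, network bandwidth and latency directly limit how fast training can proceed, no matter how fast the individual GPUs are.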

This reliance on many interconnected GPUs makes the network critical for training use cases and introduces all kinds of challenges for data center infrastructure and operations, especially if the underlying facility was not built for AI from inception. And most organizations today are not looking to build new data centers.

Therefore, organizations building out their AI data center strategies must answer important questions like:

- What AI use cases do you need to support, and based on the business outcomes you need to deliver, where do they fall among the build the model, optimize the model, and use the model buckets?

- Where is the data you need, and where is the best location to enable these use cases to optimize outcomes and minimize costs?

- Do you need to deliver more power? Are your facilities able to cool these types of workloads with existing methods, or do you require new methods like water cooling?

- Finally, what is the impact on your organization’s sustainability goals?

The power of Cisco Compute solutions for AI

As the general manager and senior vice president for Cisco’s compute business, I’m happy to say that Cisco UCS servers are designed for demanding use cases like AI fine-tuning and inferencing, VDI, and many others. With its future-ready, highly modular architecture, Cisco UCS empowers our customers with a blend of high-performance CPUs, optional GPU acceleration, and software-defined automation. This translates to efficient resource allocation for diverse workloads and streamlined management through Cisco Intersight. You could say that with UCS, you get the muscle to power your creativity and the brains to optimize its use for groundbreaking AI use cases.

But Cisco is one player in a wide ecosystem. Technology and solution partners have long been key to our success, and this is certainly no different in our strategy for AI. This strategy revolves around driving maximum customer value by harnessing the full long-term potential behind each partnership, which enables us to combine the best of compute and networking with the best tools in AI.

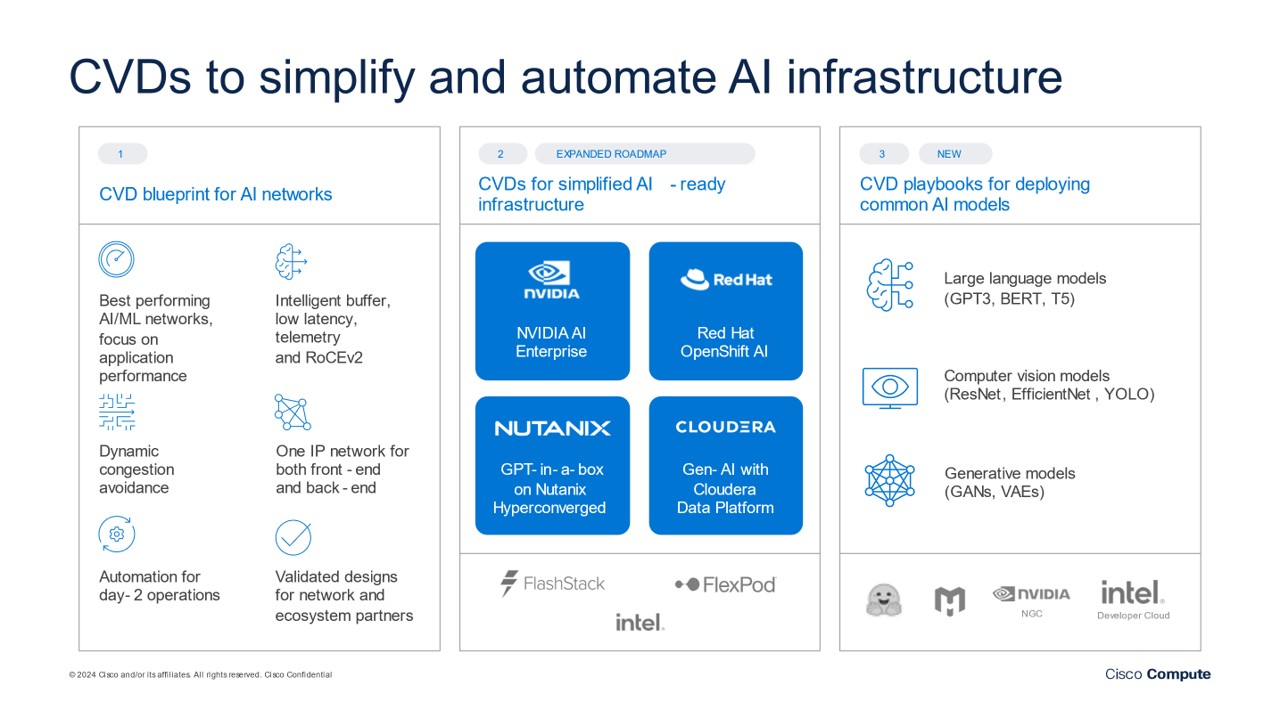

This is the case in our strategic partnerships with NVIDIA, Intel, AMD, Red Hat, and others. One key deliverable has been the steady stream of Cisco Validated Designs (CVDs), which provide pre-configured solution blueprints that simplify integrating AI workloads into existing IT infrastructure. CVDs eliminate the need for our customers to build their AI infrastructure from scratch. This translates to faster deployment times and reduced risks associated with complex infrastructure configurations and deployments.

Another key pillar of our AI computing strategy is offering customers a wide range of solution options that include standalone blade and rack-based servers, converged infrastructure, and hyperconverged infrastructure (HCI). These options enable customers to address a variety of use cases and deployment domains throughout their hybrid multicloud environments, from centralized data centers to edge endpoints. Here are just a couple of examples:

- Converged infrastructures with partners like NetApp and Pure Storage offer a strong foundation for the full lifecycle of AI development, from training AI models to day-to-day operation of AI workloads in production environments. For highly demanding AI use cases like scientific research or complex financial simulations, our converged infrastructures can be customized and upgraded to provide the scalability and flexibility needed to handle these computationally intensive workloads efficiently.

- We also offer an HCI option through our strategic partnership with Nutanix that is well suited for hybrid and multicloud environments, thanks to the cloud-native designs of Nutanix solutions. This allows our customers to seamlessly extend their AI workloads across on-premises infrastructure and public cloud resources for optimal performance and cost efficiency. This solution is also ideal for edge deployments, where real-time data processing is crucial.

AI Infrastructure with sustainability in mind

Cisco’s engineering teams are focused on embedding energy management, software and hardware sustainability, and business model transformation into everything we do. Together with energy optimization, these new innovations have the potential to help more customers accelerate their sustainability goals.

Working in tandem with engineering teams across Cisco, Denise Lee leads Cisco’s Engineering Sustainability Office with a mission to deliver more sustainable products and solutions to our customers and partners. With electricity usage from data centers, AI, and the cryptocurrency sector potentially doubling by 2026, according to a recent International Energy Agency report, we are at a pivotal moment where AI, data centers, and energy efficiency must come together. AI data center ecosystems must be designed with sustainability in mind. Denise outlined the systems design thinking that highlights the opportunities for data center energy efficiency across performance, cooling, and power in her recent blog, Reimagine Your Data Center for Responsible AI Deployments.

Recognition for Cisco’s efforts has already begun. Cisco’s UCS X-Series has received the Sustainable Product of the Year award from the SEAL Awards and an Energy Star rating from the U.S. Environmental Protection Agency. And Cisco continues to focus on critical features across our portfolio by agreeing on product sustainability requirements that address the demands on data centers in the years ahead.

Look ahead to Cisco Live

We’re just a couple of months away from Cisco Live US, our premier customer event and showcase for the many exciting innovations from Cisco and our technology and solution partners. We will be sharing many exciting Cisco Compute solutions for AI and other use cases. Our Sustainability Zone will feature a virtual tour through a modernized Cisco data center where you can learn about Cisco compute technologies and their sustainability benefits. I’ll share more details in my next blog closer to the event.

Read more about Cisco’s AI strategy in the other blogs in this three-part series on AI for Networking:
